Introduction

Human Touch in AI Governance isn’t a nice-to-have; it’s the very bedrock of trust in our automated future. Have you ever interacted with a customer service chatbot that left you feeling frustrated and utterly unheard? I have. Just last week, I spent 20 minutes in a circular conversation with an AI that couldn’t grasp the nuance of my problem. That moment, a tiny slice of daily life, crystallized a much larger corporate truth: without a guiding human touch in AI governance, even the most sophisticated systems fail us where it matters most.

We’re at a crossroads where boardroom decisions on artificial intelligence will define brand legacies and societal impact. This guide is your blueprint for weaving irreplaceable human judgment, ethics, and empathy into the fabric of your AI strategy.

The Invisible Crisis: When AI Governance Lacks a Human Pulse

I once sat in on a presentation where a tech executive proudly unveiled a new hiring algorithm. The metrics for speed and resume processing volume were staggering. Yet, when a board member quietly asked, “How do we know it isn’t inadvertently filtering out non-traditional candidates?” the room fell silent. The team had optimized for efficiency, but not for equity.

This is the precise gap that a dedicated human touch in AI governance is designed to fill. It’s the practice of asking the uncomfortable questions that data alone cannot answer.

Governance isn’t about stifling innovation; it’s about steering it with wisdom. An AI model predicting inventory needs might be mathematically flawless, but could it foresee the reputational damage of stockpiling a resource suddenly seen as unethical? That requires a contextual, human understanding of the world.

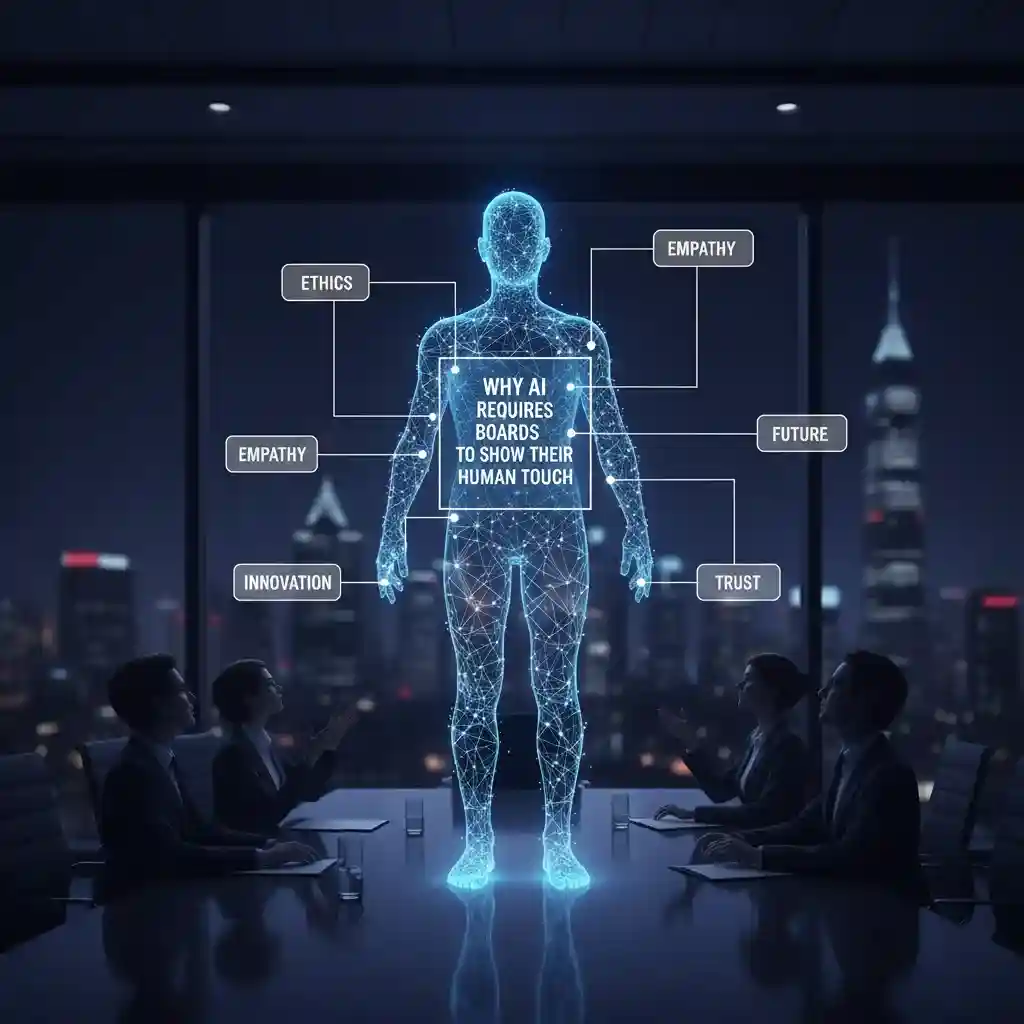

Proven Framework: Embedding the Human Touch in AI Governance

To move from theory to practice, boards need a structured approach, one that turns the human touch in AI governance from a philosophical concept into an operational checklist.

Step 1 – Establish an AI Ethics Committee with Real Teeth

Forming a committee is easy; empowering it is the challenge. This shouldn’t be a ceremonial panel. It must include diverse voices: legal, compliance, HR, customer-facing roles, and even an ethicist or sociologist. Their mandate? To conduct regular “human touch” audits of AI systems. For example, before launching a new customer-facing AI, this committee should run scenario tests. How does the AI handle a grieving customer? A user with a complex, multi-issue complaint? These are tests of empathy, and only human oversight can judge whether the system passes them.

Step 2 – Map AI Decisions to Human Impact

Every significant AI system should have a “Human Impact Statement.” This document, reviewed by the board, doesn’t just look at ROI. It asks: Who is affected? What biases could emerge? What is the potential for erosion of trust? I recall a retail brand that used AI to optimize store layouts. The algorithm brilliantly maximized sales per square foot but created layouts that were inaccessible for some disabled customers. A governance review with a human touch would have caught this by centering the human experience, not just the financial output.

Step 3 – Implement Continuous Human-in-the-Loop Checkpoints

For high-stakes decisions—loan approvals, medical triage suggestions, content moderation—the system must be designed to escalate uncertainty to a human. This “human-in-the-loop” model is the most direct application of the human touch in AI governance. It acknowledges that some decisions require moral reasoning, compassion, or an understanding of exceptional circumstances that AI cannot yet grasp.
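
The escalation rule described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `Decision` fields, the `route` function, and the 0.90 confidence threshold are all hypothetical names and values chosen for the example, and a real system would set them with its ethics committee.

```python
from dataclasses import dataclass

# Assumption: an illustrative cutoff, not a recommended value.
CONFIDENCE_THRESHOLD = 0.90


@dataclass
class Decision:
    outcome: str                 # the model's suggested outcome, e.g. "approve"
    confidence: float            # model confidence between 0 and 1
    exceptional: bool = False    # set when the case was flagged as unusual


def route(decision: Decision, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Return "auto" to apply the model's outcome, or "human_review" to escalate."""
    # Flagged cases always go to a person, however confident the model is.
    if decision.exceptional:
        return "human_review"
    # Uncertain cases are escalated instead of being decided automatically.
    if decision.confidence < threshold:
        return "human_review"
    return "auto"


if __name__ == "__main__":
    print(route(Decision("approve", 0.97)))                    # confident, routine
    print(route(Decision("approve", 0.62)))                    # uncertain
    print(route(Decision("deny", 0.99, exceptional=True)))     # flagged case
```

With these assumed values, the confident routine case is applied automatically, while the uncertain case and the flagged case are both sent to a human reviewer.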

Why Compassion is Your New Competitive Advantage

Let’s be honest: consumers and employees are increasingly skeptical of faceless automation. They crave authenticity. A brand that can demonstrably say, “Our AI is guided by human ethics,” builds immense loyalty. This strategic human touch in AI governance becomes a shield against backlash and a magnet for talent. A study by MIT Sloan underscores that companies prioritizing ethical AI see stronger long-term stakeholder trust.

Think about it. When two companies offer similar products, would you choose the one with a notorious, unfeeling AI chatbot, or the one known for thoughtful, human-backed technology? The choice is clear. This human touch in AI governance directly translates to brand equity and market resilience.

The Non-Negotiables: A Board’s Checklist for Human-Centric AI

To make this tangible, here is a core list of actions every board should champion. This checklist is where your human touch in AI governance gets its marching orders.

- Mandate transparency for all customer-facing AI systems so that users know when they are interacting with automation, a basic principle of respect in human-centered AI governance.

- Require regular bias audits conducted by third parties, not just internal teams, to ensure algorithmic fairness.

- Invest in training for employees who manage or work alongside AI, empowering them to provide the crucial human touch in AI governance at the operational level.

- Create clear and accessible channels for reporting concerns about AI outputs, fostering a culture of ethical vigilance.

- Publicly articulate your company’s AI ethics principles, making your commitment to the human touch in AI governance a public promise.
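
To make the bias-audit item on this checklist concrete, here is a minimal sketch of one check an auditor might run: comparing selection rates across groups. The function names, the sample data, and the 0.8 cutoff are assumptions for illustration. The cutoff echoes the "four-fifths" rule of thumb used in US employment contexts; it is a screening signal only and does not replace a full third-party audit.

```python
from collections import Counter

# Assumption: illustrative cutoff based on the four-fifths rule of thumb.
IMPACT_RATIO_FLOOR = 0.8


def selection_rates(records):
    """Map each group to the share of its members who were selected.

    records: iterable of (group, was_selected) pairs.
    """
    totals, selected = Counter(), Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so the rates are equal
    return min(rates.values()) / highest


if __name__ == "__main__":
    # Hypothetical audit sample: 10 candidates per group.
    sample = (
        [("group_a", True)] * 8 + [("group_a", False)] * 2
        + [("group_b", True)] * 4 + [("group_b", False)] * 6
    )
    ratio = impact_ratio(selection_rates(sample))
    if ratio < IMPACT_RATIO_FLOOR:
        print(f"Impact ratio {ratio:.2f}: escalate to the ethics committee")
```

A low ratio does not prove discrimination on its own. It tells the humans responsible for oversight where to look first.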

Navigating the Pitfalls: Common Mistakes in Human AI Oversight

Many boards fall into the trap of delegating AI oversight entirely to the IT or data science committees. This is a critical error. Technology teams are rightly focused on feasibility, performance, and innovation. The human touch in AI governance must come from a separate, elevated function that asks “should we?” not just “can we?”. Another mistake is treating ethics as a one-time compliance box to tick. In reality, maintaining the human touch in AI governance is an ongoing, iterative process of learning and adaptation.

I honestly wish more boards had learned this earlier: treating AI governance as a purely technical exercise is the fastest path to public mistrust and regulatory penalty.

Your Call to Action: Leading with Humanity

So, what’s the first move? Start a conversation at your next board meeting. Ask the simple, powerful question: “Where in our AI initiatives is the human touch in AI governance most visibly absent?” The answers will light your path forward.

This journey isn’t about being anti-technology; it’s about being pro-humanity in the age of technology. It’s about ensuring that as we build smarter machines, we don’t outsource our wisdom, our ethics, or our compassion.

Conclusion

Ultimately, the human touch in AI governance is the safeguard of our collective future. It’s the quiet voice in the boardroom that champions dignity over mere efficiency, and long-term trust over short-term gain. As you reflect on your organization’s path, ask yourself: Will our AI be remembered for its cold precision, or for its enlightened, human-guided purpose? The power to choose rests squarely with today’s leaders.

For further reading on ethical frameworks, a recent report from Forbes offers excellent insights into global trends.

FAQs: The Human Touch in AI Governance

- Why is the “human touch in AI governance” considered non-negotiable?

The human touch in AI governance is essential because AI systems lack innate human qualities like ethical reasoning, empathy, and contextual understanding. It serves as a critical safeguard against bias, ethical blind spots, and decisions that might be logically sound but socially or morally harmful.

- How can a board practically demonstrate a human touch in AI governance?

Boards can demonstrate this by establishing a dedicated AI ethics committee, mandating regular “human impact” audits for AI projects, and ensuring there are clear channels for human oversight and intervention in high-stakes automated decisions.

- Doesn’t adding a human touch in AI governance slow down innovation and efficiency?

On the contrary, it future-proofs innovation. While it may require more upfront diligence, a strong human touch in AI governance prevents costly missteps, builds public trust, and avoids regulatory penalties, ultimately ensuring smoother, more sustainable implementation of AI technologies.

- Who is responsible for providing the human touch in AI governance within an organization?

While ultimate accountability rests with the board of directors, the responsibility is shared. It requires collaboration between the board, a cross-functional ethics or governance committee, executive leadership, and the developers and managers who work with the AI systems daily.

- Can you give a concrete example of a failed project that lacked a human touch in AI governance?

Many high-profile AI failures, such as biased hiring tools or discriminatory loan algorithms, trace their root cause to this gap. These systems were often built and deployed with a focus on technical performance and efficiency, without sufficient human touch in AI governance to question the training data, objective functions, and potential for societal harm.